20.5.2019 17:05 We are not dead

We are not dead; this page is just hard to update.

For up-to-date information, please visit our social media sites, which are accessible by clicking the icons in the top right of the page.

We have added our side project "Fractal" to the projects page.

Here are also two short videos from the project:

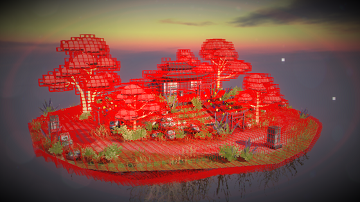

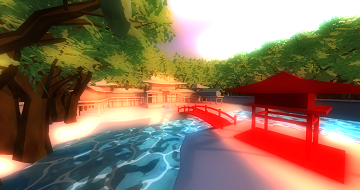

Check out the Rising Sun teaser we just uploaded:

The galleries on the "Projects" page have been updated!

Now you can take a look at some Rising Sun concept art and some new Motor and BDPT screenshots!

It's time to release the first beta version of my image viewer.

It was developed using C++, OpenGL and my custom GUI framework.

The design of the application is subject to change. I deliberately didn't add any crazy animations or effects, because it's designed to be a lightweight replacement for the Windows Photo Viewer (which has some really annoying bugs and issues, and whose multi-monitor support is just plain broken).

The tool supports linear interpolation, which was removed from the Windows Photo Viewer in newer versions.

Here are some of the features I want to add:

- Support for animated GIFs (the Windows XP version of the Windows Photo Viewer supported it, but it was dropped in newer versions...).

- Displaying folders as thumbnails, so you can click them to view their contents.

- Bicubic interpolation for better image magnification quality

- Unicode file path support

- Linux & Mac support

Supported image formats:

BMP, DDS, GIF, HDR, ICO, JPEG, PNG, Photoshop PSD, RAW, TARGA, TIFF and many more.

It's possible to set it as your standard image viewing application.

I'd be happy to hear your feedback and if you find any bugs, tell me about them!

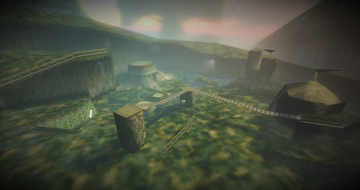

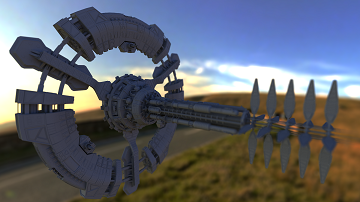

Here's a new screenshot of the game. Don't forget that it doesn't represent the quality of the final game.

A very early alpha version of the game is coming soon, so stay tuned!

The point of the alpha version is to show our work and to test the game and engine on a wide variety of systems, to ensure great performance and stability in the final version.

Currently implemented graphics effects and features:

- Oculus Rift support

- Soft dynamic shadows

- SSAO (Screen Space Ambient Occlusion)

- Adaptive high quality depth of field (without ugly bleeding artifacts)

- Per pixel lens flares

- Bloom

- Volumetric fog (a.k.a. god rays), but not the crappy screen space kind.

- Height based fog

- Linear space lighting (for a more correct image)

- Indirect lighting using light probes with varying roughness

- Decal rendering

- Particles with direct, indirect and volumetric lighting.

I now want to explain how models are loaded into Motor.

Motor makes use of a file which I will call "XML_MODEL" from now on (because that's what its class is called...).

The XML_MODEL file is an XML file used to abstract model files and their rendering options.

Here's an example of it (the structure and features are subject to change):

It basically tells the renderer how to render the model.

You can think of it as a wrapper around the model file, because the renderer never accesses any model attributes directly, but always through the XML_MODEL. Here's a very interesting part of it:

This part of the file tells the renderer which render bucket each material (inside the model file) belongs to, so it can be properly rendered.

That's actually a good question for a change. :P

A render bucket is what we call a collection of meshes which share the same rendering options.

As already explained in "Motor's data-driven renderer (part 1)", the renderer consists of multiple user-definable render passes.

Each render pass of the type "PASS_GEOMETRY" references one or more render buckets (by name).

- The XML_MODEL assigns a render bucket to each material.

- The renderer collects all meshes in the scene and creates render buckets out of them.

- When a render pass is being run, it looks for the render buckets it depends on and renders their contents.
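The three steps above can be sketched roughly like this. This is only an illustration and not Motor's actual code: the types `Mesh`, `MaterialToBucket`, `RenderBuckets` and `GeometryPass` and the functions `collectBuckets` and `runPass` are made-up names, and "rendering" here just records mesh names instead of issuing draw calls.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <vector>

// Hypothetical types for illustration; these are not Motor's real classes.
struct Mesh {
    std::string name;
    std::string material;  // material name inside the model file
};

// Stand-in for the XML_MODEL mapping: material name -> render bucket name.
using MaterialToBucket = std::map<std::string, std::string>;

// A render bucket: meshes that share the same rendering options.
using RenderBuckets = std::map<std::string, std::vector<const Mesh*>>;

struct GeometryPass {
    std::string name;
    std::vector<std::string> buckets;  // buckets referenced by name
};

// Collect all meshes in the scene and sort them into buckets.
RenderBuckets collectBuckets(const std::vector<Mesh>& scene,
                             const MaterialToBucket& mapping) {
    RenderBuckets buckets;
    for (const Mesh& mesh : scene) {
        auto it = mapping.find(mesh.material);
        if (it != mapping.end()) {
            buckets[it->second].push_back(&mesh);
        }
    }
    return buckets;
}

// A pass looks up the buckets it depends on and "renders" their contents.
// Here rendering only records the mesh names in draw order.
std::vector<std::string> runPass(const GeometryPass& pass,
                                 const RenderBuckets& buckets) {
    std::vector<std::string> drawn;
    for (const std::string& bucketName : pass.buckets) {
        auto it = buckets.find(bucketName);
        if (it == buckets.end()) continue;  // nothing in this bucket
        for (const Mesh* mesh : it->second) drawn.push_back(mesh->name);
    }
    return drawn;
}
```

With a mapping like `brick -> opaque` and `glass -> transparent`, a pass that references only the `transparent` bucket would draw just the glass meshes and never touch the rest of the scene.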

You can basically think of the whole thing as some kind of deferred renderer on geometry level.

This approach gives us the flexibility to completely customize the renderer for a game.

It also helps us reduce the number of required shaders, thereby decreasing code complexity and increasing rendering performance.

Today I want to talk about the path finding in Motor.

Most games today use a navmesh approach.

But I say navmeshes suck for a lot of things.

Here's a nice video which shows you why.

Of course not all errors depicted in the video are caused solely by using navmeshes. Some are the result of a crappy implementation.

In my opinion, more developers should start looking for alternatives.

Why? Because there are so many problems you have to deal with. And most solutions to these problems are either inefficient, inflexible or dirty hacks.

Here's an example that shows you why navmeshes suck:

Navmeshes provide no information about how much free space there is above them.

Imagine you have some kind of huge NPC that needs to do path finding. It simply can't tell if the ceiling along the path it wants to take is too low.

In the worst case it'll try to walk an impossible path and get stuck.

Another example:

You have a narrow door and a very wide NPC. The NPC won't be able to tell if the path (through the narrow door) is wide enough for it. That's why it will get stuck too.

Yet another example:

The problem with the flying NPC from the game "The Elder Scrolls IV: Oblivion" (as seen in the above-mentioned video at 3:32) shows you exactly why navmeshes simply don't work for those kinds of NPCs. Flying NPCs don't move on the ground, which is where navmeshes are.

Since navmeshes are a 2D structure, there's simply not enough information to deal with flying NPCs properly.

Of course there are solutions to the problems I just mentioned:

- You simply don't generate navmeshes for areas with low ceilings or narrow paths.

- You generate multiple kinds of navmeshes (one for each type of NPC).

- You make flying NPCs fly only above the navmesh and additionally maybe cast some rays.

- You apply some other kind of hack to get the job done.

As you can probably already tell, all of the above solutions either suck or are really inflexible.

And that's exactly why Motor uses a volumetric approach. Like for everything in Motor, we try to find an actual solution to a problem instead of working around it.

A volumetric approach can tell you exactly where an NPC can fit through and it can deal with flying NPCs perfectly.

It can even deal with NPCs that climb around on walls and ceilings, which gives you the ability to create unique and awesome NPCs!

We simply coarsely voxelize all the static collision geometry of the scene and use regular grid based path finding.

The grid will be replaced with an octree in the future (to save memory and improve accuracy for small colliders and NPCs).

While voxelizing the scene we classify each voxel. Is it part of the floor, a wall, the ceiling or is it simply empty?

It would also be possible to make dynamic objects occupy voxels, so other NPCs avoid those regions.
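To give a rough idea of what grid-based path finding on classified voxels can look like, here's a minimal sketch. It is not Motor's implementation: `VoxelGrid`, `fits` and `pathLength` are hypothetical names, it uses a plain breadth-first search, and the NPC is assumed to be one voxel wide and `height` voxels tall. It moves freely through empty space like a flying NPC; a walking NPC would additionally require a floor voxel beneath its feet.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <queue>
#include <vector>

enum class Voxel { Empty, Floor, Wall, Ceiling };

// Hypothetical coarse voxel grid; y is the up axis.
struct VoxelGrid {
    int sx, sy, sz;
    std::vector<Voxel> cells;

    VoxelGrid(int x, int y, int z)
        : sx(x), sy(y), sz(z),
          cells(static_cast<std::size_t>(x) * y * z, Voxel::Empty) {}

    bool inside(int x, int y, int z) const {
        return x >= 0 && y >= 0 && z >= 0 && x < sx && y < sy && z < sz;
    }
    int index(int x, int y, int z) const { return (z * sy + y) * sx + x; }
    Voxel& at(int x, int y, int z) { return cells[index(x, y, z)]; }
    Voxel at(int x, int y, int z) const { return cells[index(x, y, z)]; }
};

// True if an NPC that is `height` voxels tall fits with its lowest voxel at (x, y, z).
bool fits(const VoxelGrid& g, int x, int y, int z, int height) {
    for (int h = 0; h < height; ++h) {
        if (!g.inside(x, y + h, z) || g.at(x, y + h, z) != Voxel::Empty) return false;
    }
    return true;
}

// Breadth-first search over the 6-connected grid. Returns the number of steps
// from start to goal, or -1 if this NPC cannot reach the goal.
int pathLength(const VoxelGrid& g, std::array<int, 3> start,
               std::array<int, 3> goal, int height) {
    if (!fits(g, start[0], start[1], start[2], height)) return -1;
    if (!fits(g, goal[0], goal[1], goal[2], height)) return -1;

    std::vector<int> dist(g.cells.size(), -1);
    std::queue<std::array<int, 3>> open;
    dist[g.index(start[0], start[1], start[2])] = 0;
    open.push(start);

    const int dirs[6][3] = {{1, 0, 0}, {-1, 0, 0}, {0, 1, 0},
                            {0, -1, 0}, {0, 0, 1}, {0, 0, -1}};
    while (!open.empty()) {
        std::array<int, 3> cur = open.front();
        open.pop();
        int curDist = dist[g.index(cur[0], cur[1], cur[2])];
        if (cur == goal) return curDist;
        for (const auto& d : dirs) {
            int nx = cur[0] + d[0], ny = cur[1] + d[1], nz = cur[2] + d[2];
            if (!fits(g, nx, ny, nz, height)) continue;     // blocked or too low
            if (dist[g.index(nx, ny, nz)] != -1) continue;  // already visited
            dist[g.index(nx, ny, nz)] = curDist + 1;
            std::array<int, 3> next = {nx, ny, nz};
            open.push(next);
        }
    }
    return -1;
}
```

The important bit is `fits`: the same grid answers the question differently for a small and a tall NPC, which is exactly the information a navmesh doesn't have.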

Today I want to talk about the data-driven renderer of the Motor Engine.

Good question. :D

It means the renderer-pipeline is defined in data instead of code.

The order of the processes required to render a scene is entirely defined in XML (which references shaders, of course).

This makes it easy to adapt it at any time, without ever having to touch the engine's renderer code.

This also gives you great flexibility in the kinds of effects you can implement.

You might want to use forward rendering in a mobile game (due to memory constraints of the low-end hardware), but at the same time you also want a high-quality deferred renderer for high-end PCs.

Motor lets you solve this problem by defining two entirely different renderers for all your different needs. You can even define multiple different renderers for the same game.

I have taken good care that the performance doesn't suffer from the data-driven approach.

After all, with Motor, I aimed to create an extremely fast game engine.

The performance impact of the data-drivenness is negligible.

There are also cases where it can outperform regular, rigid renderers.

For example, this is the case if we have a special render pass which only targets certain kinds of objects. If none of those are visible, the entire pass can be skipped, thus saving performance.

Of course, this check can be implemented in a "rigid" renderer too, but Motor can go way beyond that.

Here's an extremely simplified example:

Imagine you have dependencies between render passes. Like "bloom" depends on "blurring the scene", which depends on "render objects to screen".

Motor could let you skip all passes if there's nothing to render. This is a lot harder to implement in a rigid renderer.
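The dependency idea can be sketched in a few lines. Again, this is just an illustration under my own assumptions and not how Motor actually does it: `RenderPass` and `passesToRun` are hypothetical names, and the passes are assumed to be listed so that dependencies come before the passes that depend on them.

```cpp
#include <cassert>
#include <set>
#include <string>
#include <vector>

// Hypothetical description of a render pass, as it might be loaded from data.
struct RenderPass {
    std::string name;
    std::vector<std::string> dependsOn;  // passes whose output this pass consumes
    bool isGeometryPass;
    int visibleObjects;                  // only meaningful for geometry passes
};

// Returns the names of the passes that actually need to run this frame.
// A geometry pass with nothing visible is skipped, and so is every pass
// that (directly or indirectly) depends on a skipped pass.
std::vector<std::string> passesToRun(const std::vector<RenderPass>& passes) {
    std::set<std::string> skipped;
    std::vector<std::string> toRun;
    for (const RenderPass& pass : passes) {
        bool skip = pass.isGeometryPass && pass.visibleObjects == 0;
        for (const std::string& dep : pass.dependsOn) {
            if (skipped.count(dep) > 0) skip = true;
        }
        if (skip) {
            skipped.insert(pass.name);
        } else {
            toRun.push_back(pass.name);
        }
    }
    return toRun;
}
```

With the chain from the example ("objects", then "blur", then "bloom"), an empty "objects" pass means none of the three passes run.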

Not really.

More like the opposite.

But if you want to unleash the full potential of Motor, you need to know how to write shaders, so you can add new materials and effects.

In the future I might implement a material/shader editor.

Along with a couple of new projects (like the "Motor" Engine), we also improved the design of the website.

Improved, which means it still sucks. :D

Enjoy!

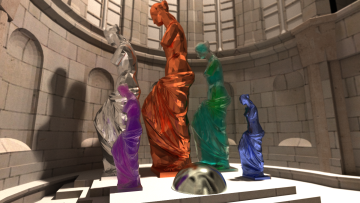

Here's another up-to-date screenshot (rendered with BDPT).

The scene contains:

- A model of the Sibenik cathedral (80'000+ Triangles)

- A tessellated sphere (15'000+ Triangles)

- Five instances of the Venus model (11'000+ Triangles)

It took way too long to render, because it was rendered with six bounces per pixel and because the rays got trapped inside the cathedral...

Here's an up-to-date screenshot (rendered with BDPT) of the Zenith space station model, which consists of more than 300'000 Triangles:

This site is still under construction!

All content was created by us!